Copilot Interactions and eDiscovery

Microsoft treats them like Teams chat - do your eDiscovery processes have that covered?

This issue is out of sync with the regular schedule around here, but that’s OK because I realized last week that my schedule had gotten off somewhere. I usually try to do a deep dive for paid subscribers every two weeks, and then I have a monthly news collection for everyone in the third week, and I take off the first week.

I think one of those five-Monday months got me screwed up, and I’ve been sending deep dives in back-to-back weeks after the news roundup, which is difficult because those deep dives take a lot of time and effort.

This week, I’m leaving this one open to the public, both to get myself back in sync and because I want everyone to have a chance to contribute comments.

The topic is also timely, thanks to a LinkedIn post by Kassi Burns about learning how Copilot interactions are stored in Exchange.

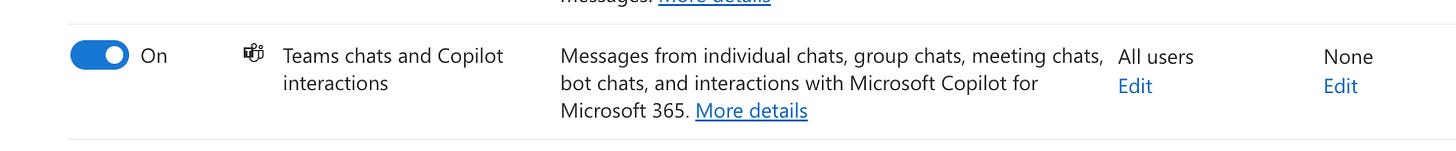

As I commented on her post, I first became aware of this when following some links to this Microsoft documentation, where I realized that Purview retention policies treat “Teams chat and Copilot interactions” as a single type of item when you set up a retention policy, as you see in the screenshot below:

I can’t do a deep dive into this because my developer tenant lacks some of the necessary licensing. Still, it’s clear that Copilot prompts and interactions are stored in the Exchange substrate and are available to the eDiscovery tools.

This was confirmed for me as I dug further into the documentation - https://learn.microsoft.com/en-us/purview/retention-policies-copilot#how-retention-works-with-microsoft-copilot-for-microsoft-365

Also, when searching by Type in eDiscovery Premium:

The question then becomes, is it relevant? I’m not a lawyer, so I’m going to ask others for their insight. I can imagine some scenarios where it would be relevant for eDiscovery. More importantly, I can imagine plenty of reasons to investigate prompts internally and to notify users that their Copilot prompts are not private.

At the very least, organizations should discuss the retention of Copilot interactions. (I’d like to think you’ve already had that conversation about Teams chat—you may need to revise it now that the setting applies to Copilot as well.)

As Kassi mentioned, if you adopt Copilot widely in your environment, you’ll need to update some custodian interview questions and ensure you’ve covered those interactions as part of the eDiscovery process.

What else should we consider when it comes to Copilot prompts?

Are you actively auditing and including Copilot information in your eDiscovery plan? If you have users active with other AI tools, like ChatGPT, are you including those in your eDiscovery and compliance processes? Has anyone been involved in an investigation or litigation where AI interactions came into play?